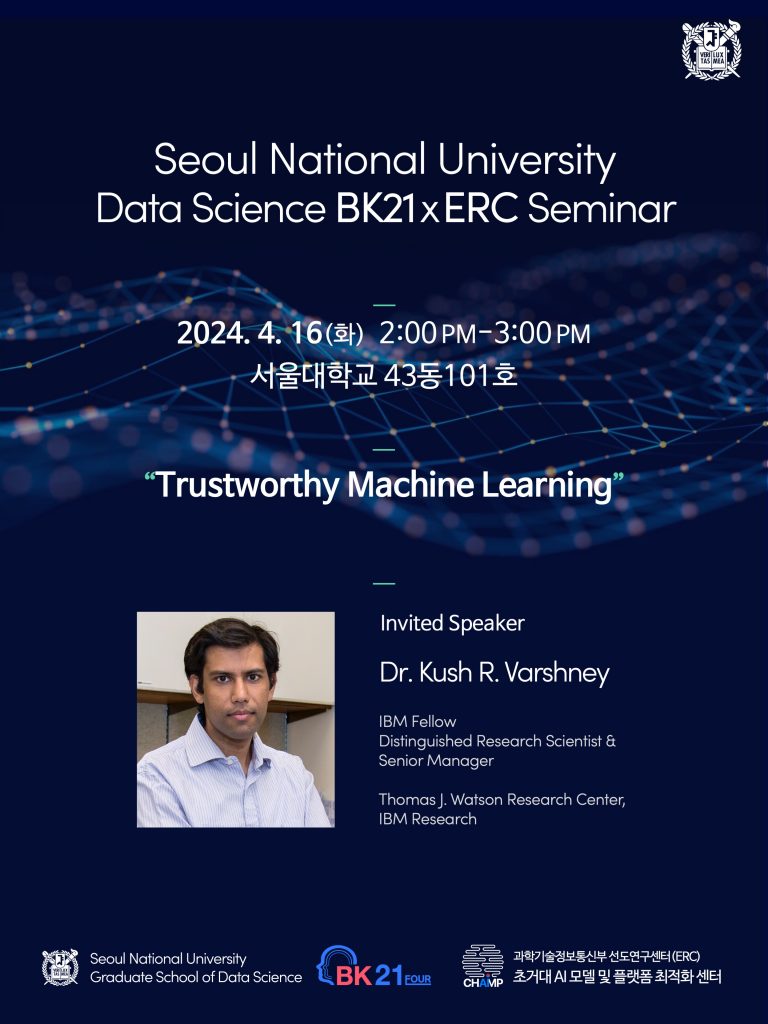

[BK21xERC Seminar] Dr. Kush R. Varshney (IBM Research), Tuesday, April 16, 2:00 PM

April 10, 2024

Date and time: Tuesday, April 16, 2024, 2:00-3:00 PM

Location: Room 101, Building 43, Seoul National University

Speaker: Dr. Kush R. Varshney (IBM Research, Thomas J. Watson Research Center)

Title: Trustworthy Machine Learning

Abstract:

We will discuss the concepts for developing accurate, fair, robust, explainable, transparent, inclusive, empowering, and beneficial machine learning systems. Accuracy is not enough when you’re developing machine learning systems for consequential application domains. You also need to make sure that your models are fair, have not been tampered with, will not fall apart in different conditions, and can be understood by people. Your design and development process has to be transparent and inclusive. You don’t want the systems you create to be harmful, but to help people flourish in ways they consent to. All of these considerations beyond accuracy that make machine learning safe, responsible, and worthy of our trust have been described by many experts as the biggest challenge of the next five years. We will consider these concepts in terms of both traditional machine learning models and foundation models.

Bio:

Kush R. Varshney received the B.S. degree (magna cum laude) in electrical and computer engineering with honors from Cornell University, Ithaca, New York, in 2004. He received the S.M. degree in 2006 and the Ph.D. degree in 2010, both in electrical engineering and computer science at the Massachusetts Institute of Technology (MIT), Cambridge. While at MIT, he was a National Science Foundation Graduate Research Fellow.

Dr. Varshney is an IBM Fellow, based at the Thomas J. Watson Research Center, Yorktown Heights, NY, where he heads the Trustworthy Machine Intelligence and Human-Centered AI teams. He was a visiting scientist at IBM Research – Africa, Nairobi, Kenya, in 2019. He applies data science and predictive analytics to human capital management, healthcare, olfaction, computational creativity, public affairs, international development, and algorithmic fairness. This work has led to the Extraordinary IBM Research Technical Accomplishment for contributions to workforce innovation and enterprise transformation, IBM Corporate Technical Awards for Trustworthy AI and for AI-Powered Employee Journey, and the IEEE Signal Processing Society’s 2023 Industrial Innovation Award.

He and his team created several well-known open-source toolkits, including AI Fairness 360, AI Explainability 360, Uncertainty Quantification 360, and AI FactSheets 360. AI Fairness 360 has been recognized by the Harvard Kennedy School’s Belfer Center as a tech spotlight runner-up and by the Falling Walls Science Symposium as a winning science and innovation management breakthrough.

He conducts academic research on the theory and methods of trustworthy machine learning. His work has been recognized through paper awards at the Fusion 2009, SOLI 2013, KDD 2014, and SDM 2015 conferences and the 2019 Computing Community Consortium / Schmidt Futures Computer Science for Social Good White Paper Competition. He independently published a book titled ‘Trustworthy Machine Learning’ in 2022, available at http://www.trustworthymachinelearning.com. He is a fellow of the IEEE.